Top AI Agent Repos 2026: Star Velocity vs Total Stars

Top AI agent repos in 2026: AutoGPT leads with 183K stars, Python is the primary language for 54% of repos, MIT covers 41% of repos, and 110 new repos were created in 2025.

The roster of top AI agent repos on GitHub now sorts two completely different ways depending on which axis you pick. By total stars, the classic ranking is unchanged: Significant-Gravitas/AutoGPT still leads with 183,848 stars. By star velocity (stars per day since creation), the top of the list is dominated by Claude Code-adjacent tooling and skills indexes with daily rates above 400 stars/day, a scale unheard of for agent repositories two years ago. We sampled 380 unique repos from four agent-relevant GitHub topic queries, of which 331 are eligible for ranking. Across those 331, Python is the primary language for 54%, and MIT and Apache 2.0 together cover 71% (78% of the repos that declare a license). 110 new agent repos were created in 2025 alone, the largest single-year cohort in the dataset.

Key findings

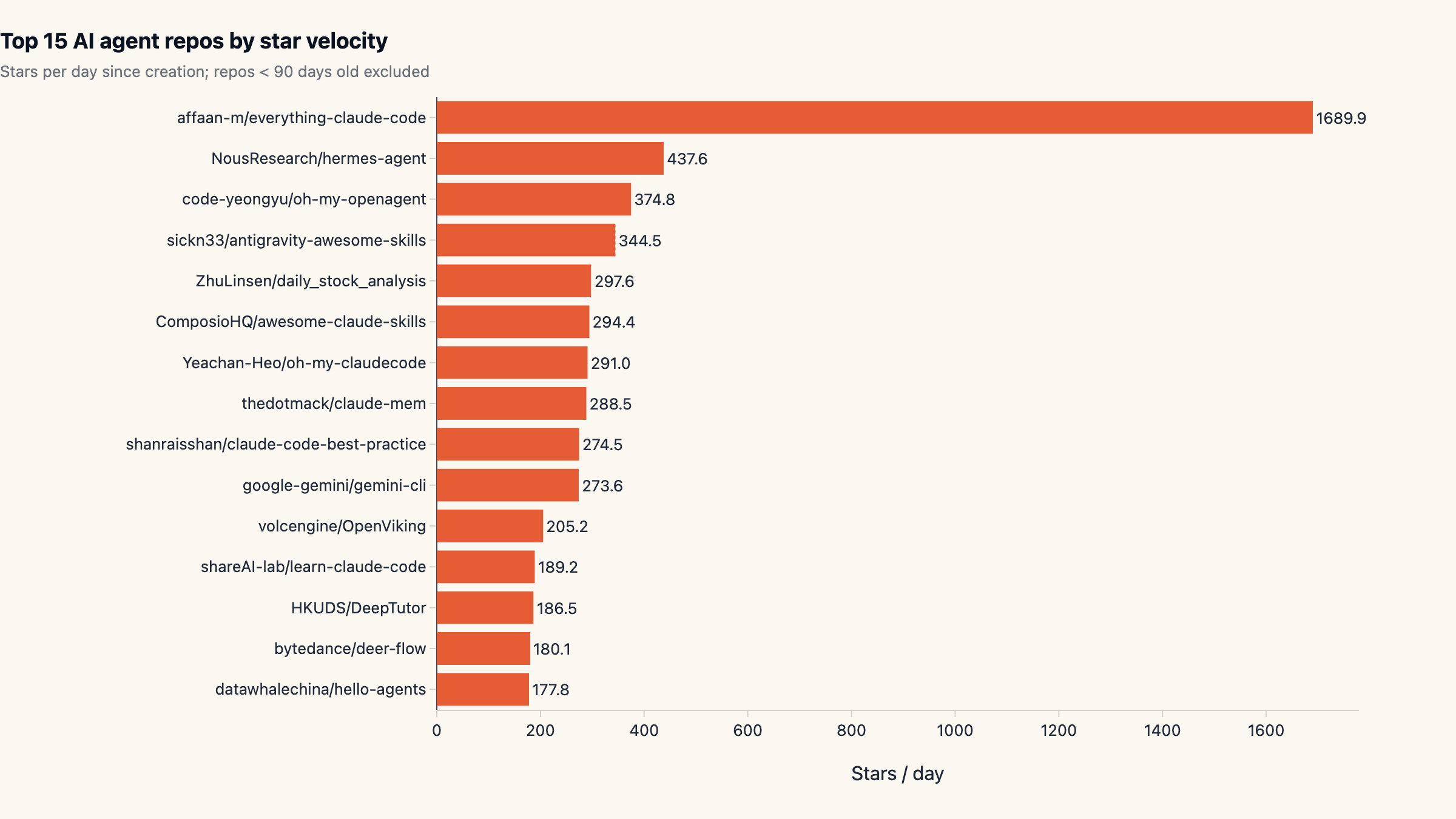

- affaan-m/everything-claude-code leads on velocity at 1,689.9 stars/day, with 168,994 stars total (velocity = stars / days since creation).

- Significant-Gravitas/AutoGPT tops the absolute leaderboard with 183,848 stars, making it the largest single AI agent repo across the four topic queries.

- The median repo in this set has 5,046 stars, and the median velocity is 7.34 stars/day across the 331 velocity-eligible repos.

- 380 unique repos surfaced across 4 topic queries after deduplication; repos appearing under multiple agent topics are counted once.

Why this matters

GitHub stars and star velocity are the cleanest public proxy for developer attention in open source. They lead market share but lag enterprise adoption. For anyone choosing an agent framework or surveying the field in 2026, two things matter: the install-base ranking (where the existing ecosystems live) and the velocity ranking (where the next set of standards is forming). They are different lists, and reading them as if they were the same list produces bad decisions.

This report sits next to our other 2026 ecosystem reads, including the AI SDK landscape across npm and GitHub and the Hugging Face Hub state, and shares the same methodology pattern of multi-source public data without vendor framing.

Methodology

- Data sources: GitHub REST API: /search/repositories

- Time window: Snapshot taken 2026-04-29

- Sample: 380 unique GitHub repositories collected via /search/repositories across four agent-relevant topic queries (topic:llm-agent, topic:ai-agents, topic:autonomous-agents, topic:agent topic:llm), deduplicated by full_name. 331 repos are eligible for the velocity ranking (created at least 90 days ago).

- Cleaning: Star velocity = stargazers_count / max(age_days, 1). Repos younger than 90 days are excluded from velocity rankings to dampen Hacker News spike noise. Language and license fields come straight from the GitHub API, with 24 repos declaring no primary language and 27 declaring no license.
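The velocity formula and the 90-day eligibility cutoff described above can be sketched in a few lines of Python. This is a minimal illustration, not the pipeline used for the report: the function names are hypothetical, the snapshot is assumed to be midnight UTC on 2026-04-29, and age is assumed to be counted in whole days. The `created_at` strings follow the ISO 8601 format the GitHub API returns.

```python
from datetime import datetime, timezone

# Assumption: the snapshot is taken at midnight UTC on the date given in the Methodology.
SNAPSHOT = datetime(2026, 4, 29, tzinfo=timezone.utc)
MIN_AGE_DAYS = 90  # repos younger than this are left out of the velocity ranking


def age_days(created_at: str, snapshot: datetime = SNAPSHOT) -> int:
    """Whole days between a repo's created_at timestamp and the snapshot."""
    # Replace the trailing "Z" so fromisoformat also works on older Python versions.
    created = datetime.fromisoformat(created_at.replace("Z", "+00:00"))
    return (snapshot - created).days


def star_velocity(stars: int, created_at: str, snapshot: datetime = SNAPSHOT) -> float:
    """stargazers_count / max(age_days, 1), as in the Cleaning step."""
    return stars / max(age_days(created_at, snapshot), 1)


def eligible_for_velocity(created_at: str, snapshot: datetime = SNAPSHOT) -> bool:
    """True when the repo was created at least MIN_AGE_DAYS before the snapshot."""
    return age_days(created_at, snapshot) >= MIN_AGE_DAYS


# Hypothetical repo created 100 days before the snapshot with 1,000 stars.
print(star_velocity(1000, "2026-01-19T00:00:00Z"))      # 10.0
print(eligible_for_velocity("2026-04-01T00:00:00Z"))    # False (28 days old)
```

The `max(age_days, 1)` guard only matters for repos created on the snapshot day; the 90-day filter removes those from the ranking anyway, so the guard mainly keeps the raw velocity column free of division errors.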

Limitations. Topic tags are self-applied, so this set is what people call agents, not what actually is an agent codebase. Several entries in the top-velocity ranking are awesome-list / cheat-sheet documentation rather than runnable agent code; they accumulate stars rapidly but do not represent agent engineering. Star velocity is also a crude metric: a repo that hit Hacker News once and went silent will outrank a repo growing steadily over years.

GitHub search caps at 1,000 results per query; the four queries together yield 380 unique repos, which we believe captures the head of the distribution but certainly misses some smaller, well-tagged projects. Different topic conventions exist (topic:agent, topic:agentic-ai, topic:autogpt), and we sampled a representative subset rather than the exhaustive union.
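To make the sampling step concrete, here is a rough sketch of how the four topic queries could be paged and merged. It is an assumption about the mechanics, not the report's actual collection script: `page_urls` and `dedupe` are hypothetical helpers, the sketch only builds request URLs (no network calls), and it assumes the search endpoint's page size limit of 100 results, which gives ten pages under the 1,000-result cap.

```python
from urllib.parse import urlencode

API = "https://api.github.com/search/repositories"
QUERIES = [
    "topic:llm-agent",
    "topic:ai-agents",
    "topic:autonomous-agents",
    "topic:agent topic:llm",
]
PER_PAGE = 100       # largest page size the search endpoint accepts
MAX_RESULTS = 1000   # per-query search cap noted in the Limitations


def page_urls(query: str) -> list[str]:
    """Request URLs needed to walk one query up to the search cap."""
    pages = MAX_RESULTS // PER_PAGE
    return [
        f"{API}?{urlencode({'q': query, 'per_page': PER_PAGE, 'page': page})}"
        for page in range(1, pages + 1)
    ]


def dedupe(result_sets: list[list[dict]]) -> list[dict]:
    """Merge result lists, keeping the first record seen for each full_name."""
    unique: dict[str, dict] = {}
    for items in result_sets:
        for repo in items:
            unique.setdefault(repo["full_name"], repo)
    return list(unique.values())


print(page_urls(QUERIES[0])[0])
# https://api.github.com/search/repositories?q=topic%3Allm-agent&per_page=100&page=1
```

Keying on `full_name` is what makes a repo tagged with several agent topics count once in the 380-repo total.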

Stars are a public attention metric, not a usage metric. The 56× gap between stars-per-download for popular framework repos versus production-loaded SDKs (documented in the AI SDK landscape) applies here as well.

AutoGPT still rules the absolute leaderboard

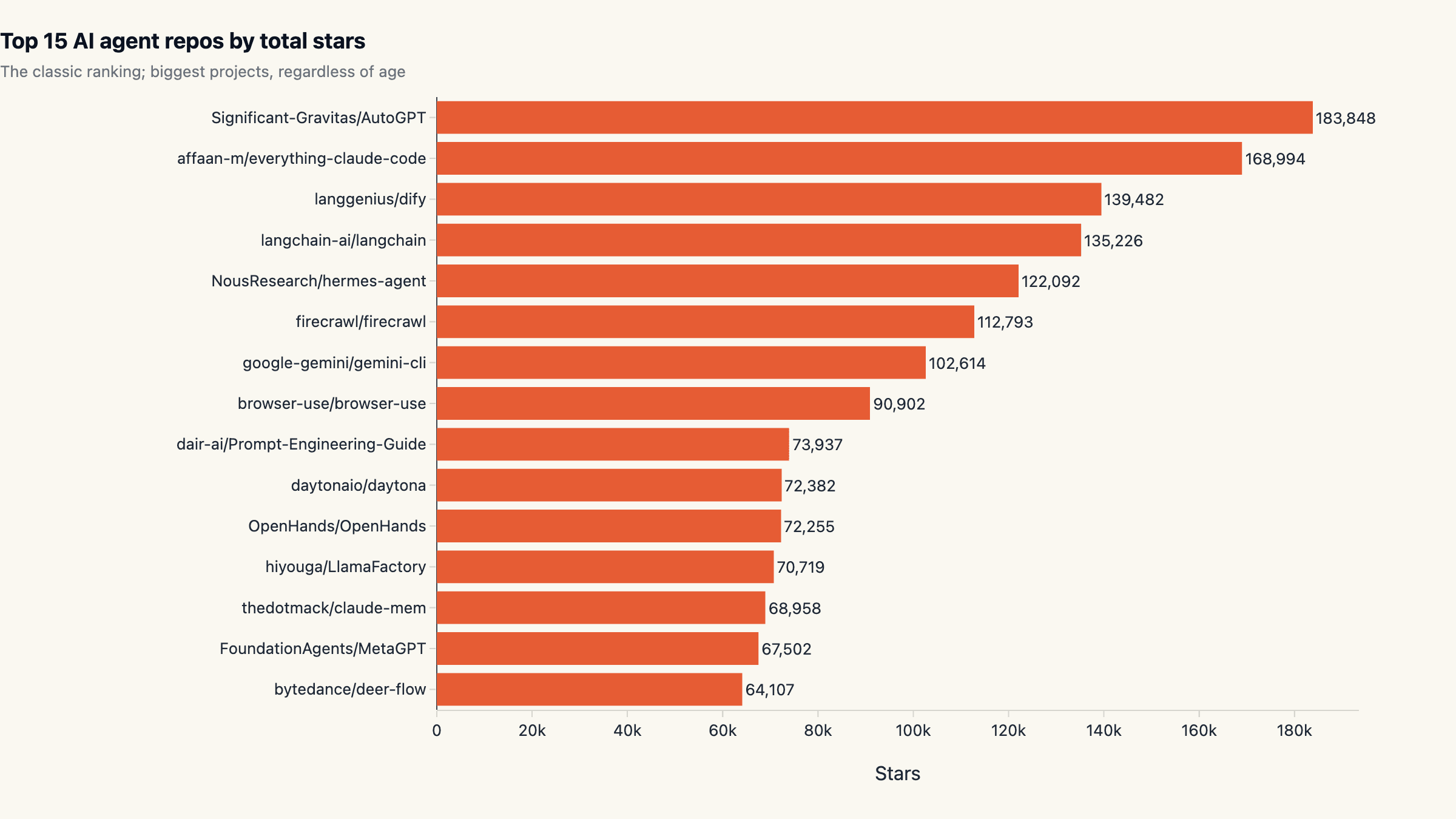

The classic AI agent ranking by total stars has not moved much: Significant-Gravitas/AutoGPT (183,848 stars), affaan-m/everything-claude-code (168,994), langgenius/Dify (139,482), and langchain-ai/langchain (135,226) hold the top four spots. AutoGPT is older than the entire LLM-agent category as a named genre; its 2023 launch is what made "AI agent" a search term in the first place.

Beyond the top four, other production-relevant entries that crossed 60K stars in 2026 include Hermes Agent (NousResearch), gemini-cli (Google), browser-use, OpenHands, MetaGPT, LlamaFactory, and ByteDance's deer-flow. The ecosystem has gotten considerably broader. Where AutoGPT used to be the one recognisable name, the field now supports at least a dozen repos above 60K stars.

Pure-stars rankings hide one important thing: the top of the list is heavily skewed toward awesome-list-style indexes and cheat-sheet repositories rather than runnable code. The everything-claude-code repo at #2 by stars is not a runnable agent stack; it is a curated index of Claude Code skills. That distinction matters when AutoGPT vs LangChain is being compared as "which is the better agent codebase" rather than "which name is more bookmarked".

The velocity ranking is a different list

Sorting by stars-per-day since creation surfaces what is growing right now. The top of that list is dominated by Claude Code-adjacent tooling and skills indexes that ride a viral wave: affaan-m/everything-claude-code posts an absurd 1,689.94 stars/day, NousResearch/hermes-agent runs at 437.61 stars/day, and code-yeongyu/oh-my-openagent clocks 374.77 stars/day. Compare those to the all-time leader AutoGPT at a more pedestrian 161.41 stars/day averaged over its three-year life.
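Because velocity is stars divided by age, the reported star counts and velocities imply each repo's age, which shows how much of the gap comes from the denominator. The short calculation below is a back-of-the-envelope check using only the figures quoted above; `implied_age_days` is a hypothetical helper name.

```python
def implied_age_days(stars: int, velocity: float) -> float:
    """Invert velocity = stars / age_days to recover the repo's age in days."""
    return stars / velocity


# Figures quoted in this report.
ecc_age = implied_age_days(168_994, 1689.94)      # everything-claude-code
autogpt_age = implied_age_days(183_848, 161.41)   # AutoGPT

print(round(ecc_age))       # 100
print(round(autogpt_age))   # 1139
```

So the two repos have similar star totals, but one accumulated them in roughly 100 days and the other in roughly 1,139 days (a little over three years). A lifetime average like this rewards young repos and says nothing about whether AutoGPT is still gaining stars today.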

The velocity top-15 is a useful map of what kinds of agent content the developer community is starring most heavily in 2026: Claude Code skills indexes, dev-tool wrappers, and CLI agents. Of the 15, the cleanest production examples are claude-mem (288 stars/day) and gemini-cli (273 stars/day); most of the rest are awesome-style aggregator repos.

Reading the AI agent star-velocity chart as "what the next generation of agent infrastructure looks like" is a stretch. The more accurate read is "what the open-internet narrative around agents currently centres on", which in 2026 is Claude Code-style tooling rather than the autonomous-loop genre AutoGPT defined.

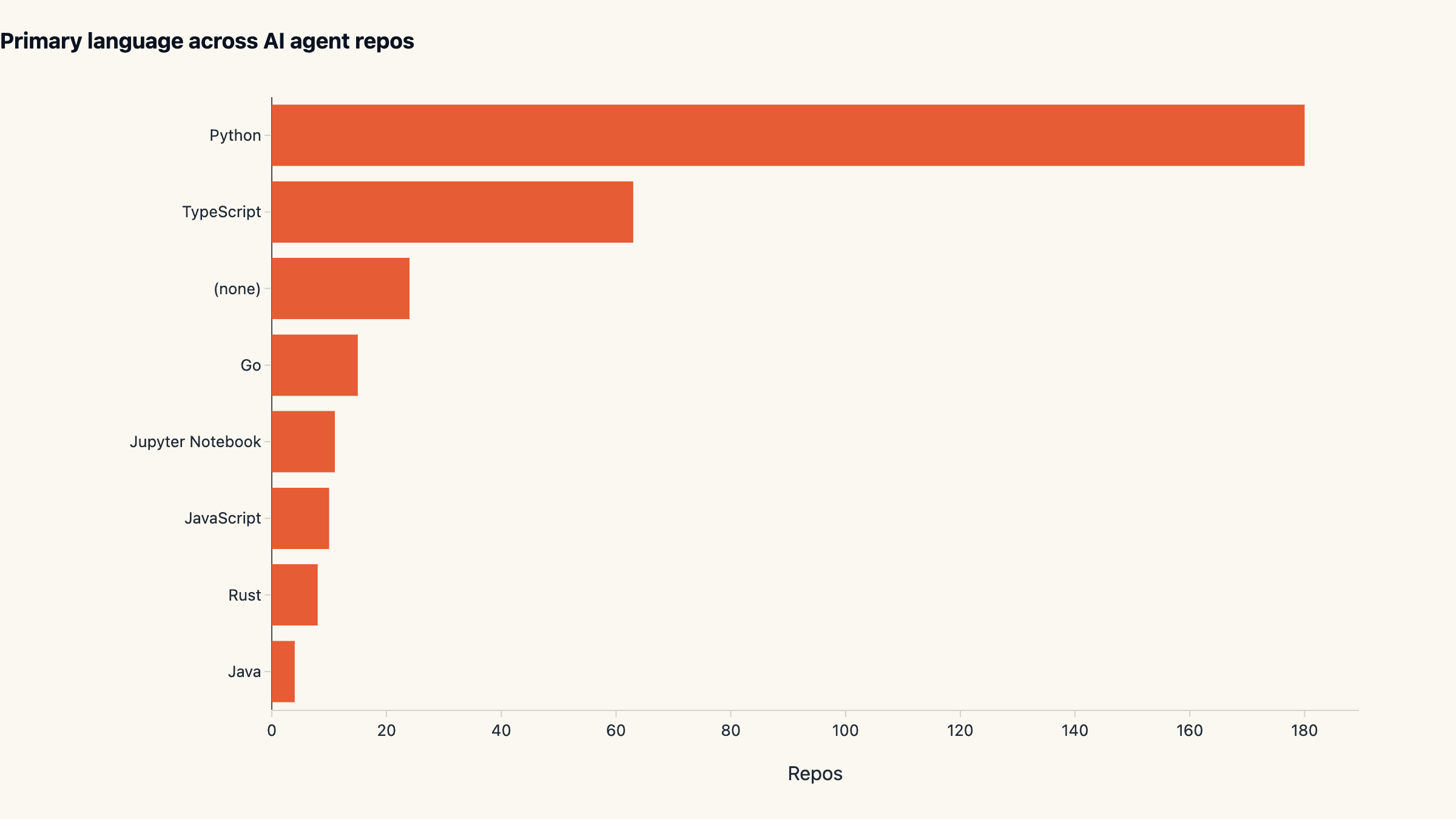

Python owns 54% of agent code

Across the 331 repos eligible for ranking, Python is the primary language for 180 (54.4%), TypeScript for 63 (19.0%), and Go for 15 (4.5%). 24 repos declare no primary language; most of these are documentation and awesome-list indexes. Jupyter Notebook (11), JavaScript (10), Rust (8), and Java (4) round out the long tail.
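For readers who want to reproduce the language split from API records, a tally over the `language` field is enough. The sketch below is illustrative only: `language_shares` is a hypothetical helper, and the repo list is a stand-in rebuilt from the counts reported above (with the unitemised remainder grouped as "Other"), not the actual dataset. GitHub reports a missing primary language as null, represented here as `None`.

```python
from collections import Counter


def language_shares(repos: list[dict]) -> dict:
    """Percent of repos per primary language; None means no language declared."""
    counts = Counter(repo.get("language") for repo in repos)
    total = sum(counts.values())
    return {lang: round(100 * n / total, 1) for lang, n in counts.most_common()}


# Counts as reported in this section; None is the no-primary-language group.
reported = {
    "Python": 180, "TypeScript": 63, "Go": 15, None: 24,
    "Jupyter Notebook": 11, "JavaScript": 10, "Rust": 8, "Java": 4,
}
other = 331 - sum(reported.values())  # repos in languages not itemised above

repos = [{"language": lang} for lang, n in reported.items() for _ in range(n)]
repos += [{"language": "Other"} for _ in range(other)]

shares = language_shares(repos)
print(shares["Python"], shares["TypeScript"], shares["Go"])  # 54.4 19.0 4.5
print(other)                                                  # 16
```

Note that the shares use all 331 eligible repos as the denominator, including the 24 with no declared language.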

The Python lead aligns with the LLM ecosystem at large, but the TypeScript share is meaningfully bigger here than in adjacent ML categories. That reflects the JavaScript-first agent stack (Vercel ai, LangChain JS, browser-use's TS port, the official OpenAI and Anthropic Node SDKs covered in the AI SDK landscape). Web-native agents are a real category now, not a Python-with-a-thin-wrapper afterthought.

Two practical takeaways. First, when picking a stack for a new agent project, language choice meaningfully narrows the candidate set: Python pulls AutoGPT, MetaGPT, OpenHands, Dify, LangChain Python, LlamaIndex Python, browser-use; TypeScript pulls Vercel ai, LangChain JS, browser-use TS, claude-mem, gemini-cli. Second, the small Go and Rust footprints are not evidence of low usage but of those language communities building narrower, more opinionated tools rather than competing for breadth.

License posture: 71% permissive

Among the 304 repos that declare a license, MIT covers 137 (45%) and Apache-2.0 covers 101 (33%). Together, 78% of licensed agent repos use a permissive open license. AGPL-3.0 claims 12 repos and GPL-3.0 another 11, the only meaningful copyleft footprint. Across the full 331-repo set (including the 27 with no declared license), the permissive share is 71%.
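The two permissive-share figures differ only in their denominator, which is easy to lose track of. The arithmetic below recomputes them from the counts in this section; the variable names are illustrative, and the one-decimal rounding is a choice made here rather than the report's convention.

```python
TOTAL_REPOS = 331   # repos eligible for ranking
NO_LICENSE = 27     # repos with no declared license
MIT = 137
APACHE_2 = 101

declared = TOTAL_REPOS - NO_LICENSE   # 304 repos declare a license
permissive = MIT + APACHE_2           # 238 repos under MIT or Apache-2.0


def pct(part: int, whole: int) -> float:
    """Percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


print(pct(MIT, declared))             # 45.1  MIT share of licensed repos
print(pct(APACHE_2, declared))        # 33.2  Apache-2.0 share of licensed repos
print(pct(permissive, declared))      # 78.3  permissive share of licensed repos
print(pct(permissive, TOTAL_REPOS))   # 71.9  permissive share of all 331 repos
print(pct(MIT, TOTAL_REPOS))          # 41.4  MIT share of all 331 repos
```

The 78% figure answers "of repos that chose a license, how many chose a permissive one", while the figure over all 331 repos (71.9% here, quoted as 71% in the text) treats unlicensed repos as not permissive, which is the safer reading for anyone planning to reuse code.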

MIT's lead over Apache-2.0 here is the inverse of the Hugging Face Hub pattern, where Apache 2.0 dominates open-weight model releases (49% of top 1000). The difference is convention: framework and library code defaults to MIT in the JavaScript and Python communities; ML model releases default to Apache 2.0 with its explicit patent grant. Both are permissive enough that downstream users rarely face license-driven choice friction.

Avoid the AGPL-3.0 stack unless your project is already AGPL-compatible. The dozen agent repos under that license are strong but their network-copyleft posture pulls downstream users into compliance work that Apache and MIT do not.

2025 was the agent gold rush: 110 new repos

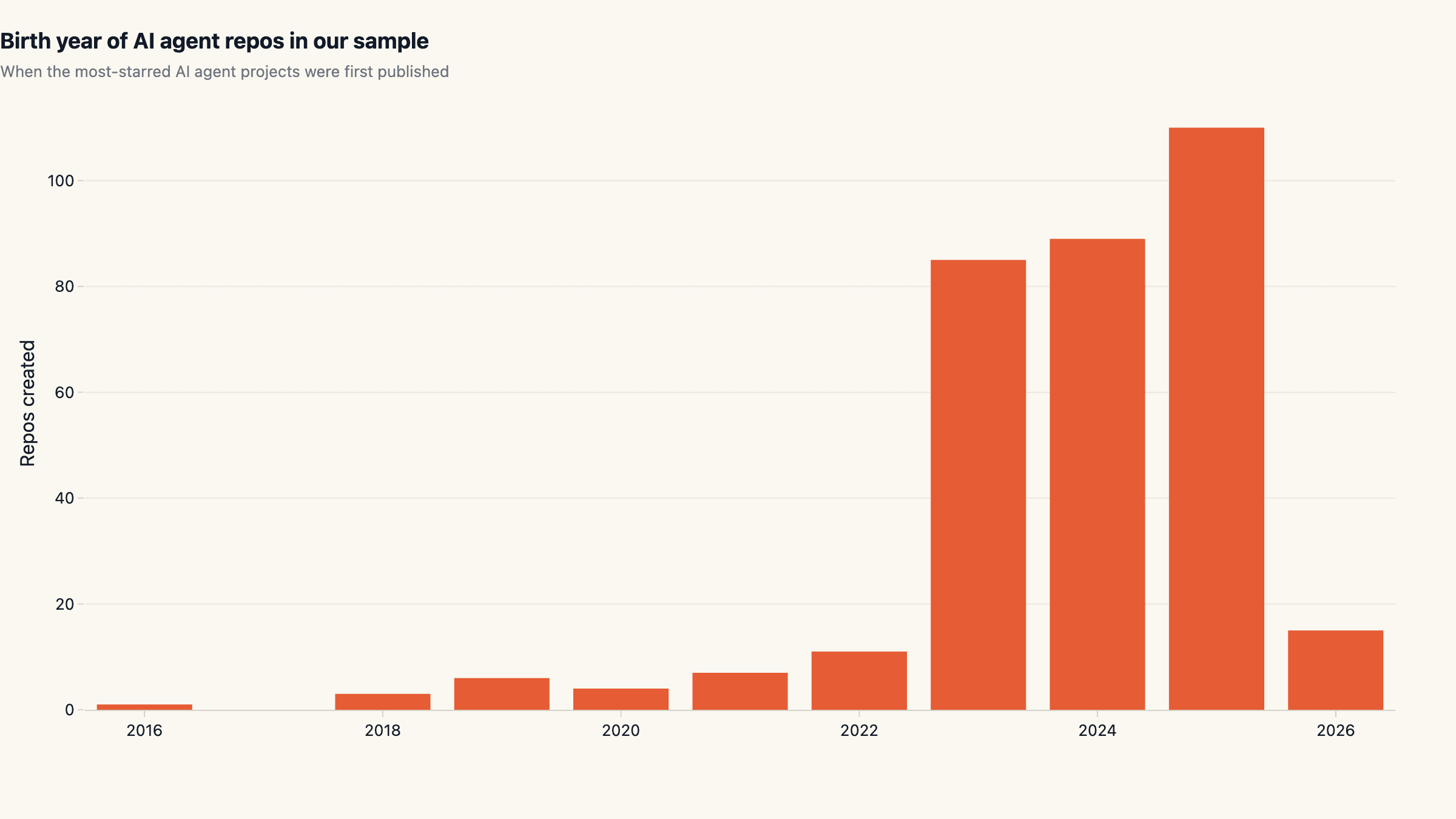

Cohort-bucketing by repo creation year shows the AI-agent topic took off after AutoGPT in 2023 (85 new repos), accelerated through 2024 (89), and peaked in 2025 with 110 new agent repos in our sample. Just under four months into 2026 we already see 15 new entries, which we read as tracking roughly flat year-over-year and as a sign that the open source agent stack gold rush has stabilised rather than stalled.
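Cohort-bucketing of this kind amounts to counting repos by the year in their `created_at` timestamp. A minimal sketch, assuming records shaped like GitHub API results; `cohort_counts` is a hypothetical helper and the sample records are made up for illustration.

```python
from collections import Counter


def cohort_counts(repos: list[dict]) -> dict[int, int]:
    """Number of repos created in each calendar year, sorted by year."""
    # created_at is an ISO 8601 string such as "2023-03-16T09:21:07Z",
    # so the first four characters are the creation year.
    years = Counter(int(repo["created_at"][:4]) for repo in repos)
    return dict(sorted(years.items()))


# Hypothetical records, not rows from the dataset.
sample = [
    {"full_name": "example/rl-agents", "created_at": "2016-05-02T10:00:00Z"},
    {"full_name": "example/agent-a", "created_at": "2023-03-16T09:21:07Z"},
    {"full_name": "example/agent-b", "created_at": "2023-11-01T00:00:00Z"},
    {"full_name": "example/agent-c", "created_at": "2025-07-09T12:30:00Z"},
]

print(cohort_counts(sample))  # {2016: 1, 2023: 2, 2025: 1}
```

Bucketing by creation date counts when a repo was born, not when it adopted an agent topic tag, so older projects that were tagged later still land in their original year.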

Pre-2023 entries are almost entirely "agent" projects predating the LLM era (RL agents, multi-agent simulators) that have been re-tagged. The earliest in the dataset is from 2016 (one repo). The 2018-2021 cohort (20 repos) sits in the same category. These legacy entries inflate the topic-tagged count but do not represent the LLM-agent genre we usually mean by "AI agent".

The clean read is that the top AI agent repos category is a 2023-and-later phenomenon driven by AutoGPT, with a velocity shift in 2024 toward production tools and a 2025 broadening toward auxiliary tooling (skills indexes, CLI wrappers, MCP adapters). The 2026 cadence is consistent with steady compounding rather than the explosion we saw in 2023-2024.

What this means for picking an agent stack today

Three operating heuristics fall out of the data. First, do not pick by velocity rank alone. The top of the velocity list is heavily skewed toward awesome-list aggregators and skills indexes that ride viral waves. They are useful as discovery surfaces but they are not the agent stack. The cleanest production-relevant velocity examples are claude-mem, gemini-cli, firecrawl, and OpenHands.

Second, treat the absolute-stars top as the install-base ranking, not as a quality ranking. AutoGPT has 183K stars and genuine reputation, but its 2023 architecture lags the agent patterns mature teams converged on in 2024-2025. LangChain at 135K stars is the more battle-tested choice for orchestration; Dify at 139K stars is the strongest no-code/low-code orchestrator of the cohort. Pick the engine that fits your pattern rather than the one with the tallest bar.

Third, weight language carefully. Python wins on breadth, but the JavaScript stack (Vercel ai, LangChain JS, browser-use TS) gives you closer integration with web product surfaces. If your agent ships inside a web app, the TypeScript side has caught up enough that the Python-with-thin-wrapper compromise is no longer the default. See the AI SDK landscape report for the install-volume ranking on that side.

Teams that want to skip the framework-selection problem entirely can run almost any of these stacks behind a sandboxed agent runtime; that is the path maxgent is built around. For reference patterns and example deployments see the use-cases gallery.

What we are not measuring

Three caveats for readers planning to act on this. Stars are not usage. A repo with 100K stars and zero production deployments is qualitatively different from a repo with 10K stars and ten thousand active production users. The two cannot be separated from public GitHub data alone, and combining stars with download volume (npm, PyPI) gives a much sharper picture than either signal in isolation.

The topic search is incomplete. Our four queries cover the most common conventions but miss topic:agentic-ai, topic:autogpt, and several smaller community tags. A wider-net version of this analysis is on the roadmap and will surface roughly 600-700 unique repos rather than 380.

Awesome-list inflation is real and growing. The velocity top-15 includes at least four pure-aggregator repos that accumulate stars rapidly without representing buildable agent systems. Filtering them out would produce a cleaner production-focused velocity ranking; we kept them in for transparency about how the public ranking actually looks.
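A filter of the kind mentioned above could start from a simple heuristic. The sketch below is purely illustrative and was not applied to this report's rankings: the keyword list, the function names, and the rule that a missing primary language signals a documentation repo are all assumptions, and any real filter would need manual review of borderline cases.

```python
# Hypothetical keyword hints; tune or replace for real use.
AGGREGATOR_HINTS = ("awesome", "cheat-sheet", "cheatsheet", "curated")


def looks_like_aggregator(repo: dict) -> bool:
    """Heuristic: no primary language, or an aggregator keyword in name/description/topics."""
    if repo.get("language") is None:
        return True
    text = " ".join([
        repo.get("full_name", ""),
        repo.get("description") or "",
        " ".join(repo.get("topics") or []),
    ]).lower()
    return any(hint in text for hint in AGGREGATOR_HINTS)


def production_candidates(repos: list[dict]) -> list[dict]:
    """Repos that the heuristic does not flag as aggregators."""
    return [repo for repo in repos if not looks_like_aggregator(repo)]


# Made-up records for illustration.
sample = [
    {"full_name": "example/awesome-agents", "language": "Python",
     "description": "A list of agent projects", "topics": []},
    {"full_name": "example/agent-runtime", "language": "Go",
     "description": "Sandboxed runner", "topics": ["ai-agents"]},
    {"full_name": "example/skills-index", "language": None,
     "description": None, "topics": []},
]

print([r["full_name"] for r in production_candidates(sample)])
# ['example/agent-runtime']
```

A keyword rule like this will produce false positives (a runnable tool that describes itself as "curated") and false negatives, which is one reason to publish the unfiltered ranking alongside any filtered one.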

We will refresh this dataset every three months. The slug is permanent; subsequent versions will appear as updates rather than new URLs, so any backlinks to this report stay valid as the numbers evolve.

Cite this research

maxgent (2026). Top AI Agent Repos on GitHub: Star Velocity vs Total Stars in 2026. https://maxgent.ai/blog/top-ai-agent-repos-2026/

Charts and data are released under CC-BY 4.0. Please link back when reusing.

MoClaw Research publishes quarterly data studies on the AI tools ecosystem, drawing on public registries (npm, GitHub, Hugging Face, arXiv, Google Trends). All charts and datasets ship under CC-BY 4.0.

References: GitHub REST API: /search/repositories