AI Image-to-Code Frontend Builder

Generate stunning website designs with AI, then convert them to production-ready code section by section.

Great approach. Separating visual design from coding produces much better results than asking AI to invent aesthetics and write code simultaneously. I'll:

1. Generate a design image for each of the 7 sections independently
2. Extract key assets (product photos, decorative elements)
3. Convert each section to responsive HTML/CSS/JS
4. Iterate with screenshot comparison until it matches

Starting with the Hero section. Style direction: minimal, editorial, warm, natural, premium.

Image-to-code build complete for 2 sections so far.

The key difference vs. prompting AI to code directly:

- Design-first: each section gets a dedicated visual concept with proper composition, spacing, and assets

- Asset extraction: real visual elements from the design, not generic stock photos or placeholder SVGs

- Section-by-section: each part gets full attention instead of the AI trying to invent everything at once

- 83% smaller images: WebP conversion with transparent backgrounds

I've built the Hero and Product Showcase. Want me to continue with the remaining 5 sections (About, Services, Testimonials, CTA, Footer)?

Full landing page complete. 7 sections, all built from AI-generated design references.

The image-to-code workflow produced a site that looks nothing like typical AI-generated pages. No generic SaaS layouts, no gradient halos, no random card grids. Each section has intentional composition because the visual design was done separately from the code.

Want me to deploy it to a preview URL, or refine any section with screenshot comparison?


What to watch for

AI generates each section as a separate design image before writing any code

Assets are extracted from designs with transparent backgrounds and converted to WebP

Screenshot comparison is used to iterate until the code matches the visual exactly


How AI Image-to-Code Frontend Builder Works with MoClaw

Generate Section-by-Section Designs

Describe your website concept and style direction. AI generates high-quality design images for each section independently, giving every part full visual attention.

Extract and Optimize Assets

AI extracts visual elements from each design, removes their backgrounds, converts them to WebP, and prepares production-ready assets. No stock photos needed.

Convert Designs to Responsive Code

Each section is converted to clean HTML/CSS/JS that matches the design reference. AI iterates with screenshot comparison until the code matches the visual exactly.
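The page does not say how MoClaw scores a screenshot against its design. As a minimal sketch, assuming both images have the same dimensions and are available as flat lists of RGBA tuples, a simple pixel-mismatch ratio shows the idea. The `mismatch_ratio` and `matches` functions, the per-channel `tolerance` of 8, and the 1% acceptance threshold are all hypothetical.

```python
from typing import Sequence, Tuple

Pixel = Tuple[int, int, int, int]  # (R, G, B, A), each channel 0-255


def mismatch_ratio(
    design: Sequence[Pixel],
    rendered: Sequence[Pixel],
    tolerance: int = 8,
) -> float:
    """Fraction of pixels where any channel differs by more than `tolerance`.

    Both screenshots must have the same dimensions, flattened row by row.
    """
    if len(design) != len(rendered):
        raise ValueError("screenshots must have the same dimensions")
    if not design:
        return 0.0
    mismatched = sum(
        1
        for a, b in zip(design, rendered)
        if any(abs(ca - cb) > tolerance for ca, cb in zip(a, b))
    )
    return mismatched / len(design)


def matches(
    design: Sequence[Pixel],
    rendered: Sequence[Pixel],
    threshold: float = 0.01,
) -> bool:
    """Hypothetical stopping rule: accept when at most 1% of pixels differ."""
    return mismatch_ratio(design, rendered) <= threshold


if __name__ == "__main__":
    white, black = (255, 255, 255, 255), (0, 0, 0, 255)
    design = [white] * 99 + [black]  # 100-pixel toy "screenshot"
    rendered = [white] * 100
    print(mismatch_ratio(design, rendered), matches(design, rendered))  # 0.01 True
```

The small tolerance absorbs minor color noise. Real visual-diff tools often prefer perceptual metrics such as SSIM, because anti-aliasing and font rendering shift individual pixels even when a layout is correct.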

What You Can Do with AI Image-to-Code Frontend Builder

Business Landing Pages

Build premium landing pages for local businesses, restaurants, studios, and boutiques that stand out from generic templates.

SaaS Product Pages

Create unique SaaS marketing pages that break free from the 'centered hero + gradient halo' pattern.

Mobile App Showcases

Design and build mobile-first product pages with device mockups and app store-ready screenshots.

Portfolio and Creative Sites

Build visually distinctive portfolios and creative agency sites with editorial layouts and custom assets.

AI Image-to-Code Frontend Builder FAQ

How does AI image-to-code work for building websites?

Instead of asking AI to invent design and code simultaneously, you first generate high-quality design images for each section of your website. Then AI converts each image into clean, responsive code. This separation produces dramatically better visual results.

Can I customize the visual style and design direction?

Absolutely. You provide style keywords like 'minimal, editorial, warm, premium' and MoClaw generates designs matching that direction. You can iterate on each section independently until you're happy with the look before any code is written.

What code languages and frameworks does it output?

MoClaw outputs clean HTML, CSS, and JavaScript by default. You can also specify a framework or library such as React, Next.js, Vue, or Tailwind CSS, and it will generate code in your preferred stack.

How is this better than directly prompting AI to code a website?

When AI codes a website from text prompts alone, it has to simultaneously invent aesthetics, layout, assets, responsive logic, and implementation. The result is usually generic. By separating design from coding, each step gets full attention and the output is significantly more polished.

Can I use my own design images instead of AI-generated ones?

Yes. You can upload Figma exports, screenshots from Dribbble, photos of whiteboard sketches, or any visual reference. MoClaw will convert them to code the same way it handles AI-generated designs.

How does asset extraction work?

MoClaw identifies visual elements in each design image, such as product photos, icons, decorative shapes, and textures. It generates and extracts each asset individually with a transparent background, converts it to WebP format, and optimizes file sizes automatically.
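The page does not specify MoClaw's background-removal technique; production tools usually rely on segmentation models. As a much simpler illustration, assuming an asset sits on a flat, known background color, the hypothetical `remove_flat_background` function below makes every pixel close to that color fully transparent. The WebP encoding step is not shown. In Python it is commonly handled by an imaging library such as Pillow.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]
RGBA = Tuple[int, int, int, int]


def color_distance_sq(a: RGB, b: RGB) -> int:
    """Squared Euclidean distance between two RGB colors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def remove_flat_background(
    pixels: List[RGB],
    background: RGB,
    threshold: int = 30,
) -> List[RGBA]:
    """Convert RGB pixels to RGBA, making near-background pixels transparent.

    A pixel within `threshold` (Euclidean RGB distance) of `background`
    gets alpha 0; every other pixel stays fully opaque (alpha 255).
    """
    limit = threshold * threshold  # compare squared distances, no sqrt needed
    result: List[RGBA] = []
    for r, g, b in pixels:
        alpha = 0 if color_distance_sq((r, g, b), background) <= limit else 255
        result.append((r, g, b, alpha))
    return result


if __name__ == "__main__":
    # A tiny 2x2 "image": three near-white background pixels and one red product pixel.
    image = [(255, 255, 255), (250, 252, 248), (200, 30, 40), (255, 255, 255)]
    print(remove_flat_background(image, background=(255, 255, 255)))
    # [(255, 255, 255, 0), (250, 252, 248, 0), (200, 30, 40, 255), (255, 255, 255, 0)]
```

A hard cutoff like this leaves jagged edges around the subject. A softer variant would ramp alpha gradually across a band of distances instead of switching between 0 and 255.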

How much does the image-to-code workflow cost?

MoClaw offers a free tier that includes basic image generation and code conversion. The image-to-code workflow uses standard credits, similar to any other MoClaw task. Most landing pages cost less than $5 in credits to build.

Can I combine this with other MoClaw features like scheduling?

Yes. For example, you can set up a workflow that monitors competitor websites for design changes, generates updated design concepts, and rebuilds specific sections automatically. You can also schedule regular design-refresh iterations.

Frontend Building: Manual Coding vs Cursor/v0 vs MoClaw Image-to-Code

See how MoClaw's AI-powered approach differs from traditional tools.

| Feature | Manual Coding | Cursor / v0 | MoClaw |
|---|---|---|---|
| Design quality | Depends on designer skill | Generic AI aesthetic | Custom design per section |
| Workflow | Figma → handoff → code | Text prompt → code | Generate design → extract assets → code |
| Asset creation | Stock photos or custom shoot | Placeholder images | AI-generated, auto-extracted, WebP optimized |
| Iteration method | Back-and-forth with designer | Re-prompt and hope | Screenshot comparison, section by section |
| Responsive design | Manual breakpoints | Hit or miss | Built-in with fluid typography |
| Time to build | Days to weeks | Minutes (low quality) | 30 minutes (high quality) |

Why AI Image-to-Code Frontend Development?

Most AI-generated websites look the same because the workflow is wrong, not because the AI is incapable.

Design and Code Are Separate Skills

AI image generation excels at visual composition. AI coding excels at implementation. Combining them in one prompt forces the model to compromise on both. Separating them lets each shine.

Section-by-Section Iteration

Instead of regenerating an entire page, you can refine one section at a time. Screenshot comparison ensures the code matches the design exactly, not approximately.
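The section-by-section loop can be sketched as a small driver that renders one section, compares it with its design, and revises only that section until the difference falls below a threshold or an iteration budget runs out. This is an assumption about the workflow's shape, not MoClaw's API: the `refine_section` function, the `render`, `compare`, and `revise` callables, the 1% threshold, and the five-iteration cap are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class IterationResult:
    section: str
    iterations: int  # number of render-and-compare passes performed
    final_diff: float
    converged: bool


def refine_section(
    section: str,
    code: str,
    render: Callable[[str], bytes],  # hypothetical: code -> screenshot
    compare: Callable[[bytes], float],  # hypothetical: screenshot -> diff score, 0.0 = identical
    revise: Callable[[str, float], str],  # hypothetical: ask the model to fix differences
    threshold: float = 0.01,
    max_iterations: int = 5,
) -> IterationResult:
    """Render, compare, and revise one section until it matches its design."""
    diff = compare(render(code))
    iterations = 1
    while diff > threshold and iterations < max_iterations:
        code = revise(code, diff)
        diff = compare(render(code))
        iterations += 1
    return IterationResult(section, iterations, diff, diff <= threshold)


if __name__ == "__main__":
    # Toy stand-ins: the "code" is just a number for the remaining error,
    # and each revision halves it.
    result = refine_section(
        "Hero",
        code="0.08",
        render=lambda c: c.encode(),
        compare=lambda shot: float(shot.decode()),
        revise=lambda c, d: str(d / 2),
    )
    print(result)
```

Because each section is refined independently, a failure to converge on one section (for example the Footer) leaves the already-approved sections untouched.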

Production-Ready Output

AI-extracted assets in WebP format, responsive CSS with fluid typography, clean semantic HTML. The output is deployment-ready, not a prototype that needs rebuilding.
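Fluid typography is commonly implemented with the CSS `clamp()` function, which scales a font size linearly between two viewport widths and caps it at both ends. The helper below is a sketch of that standard calculation, not MoClaw's generator; the `fluid_font_size` name, the 16px root font size, and the default 360px to 1280px viewport range are assumptions.

```python
def fluid_font_size(
    min_px: float,
    max_px: float,
    min_viewport_px: float = 360,
    max_viewport_px: float = 1280,
    root_px: float = 16,
) -> str:
    """Return a CSS clamp() expression that grows linearly from `min_px`
    at `min_viewport_px` to `max_px` at `max_viewport_px`."""
    if max_viewport_px <= min_viewport_px:
        raise ValueError("max_viewport_px must exceed min_viewport_px")
    # px of font size gained per px of viewport width
    slope = (max_px - min_px) / (max_viewport_px - min_viewport_px)
    # font size the line would reach at a 0px-wide viewport
    intercept_px = min_px - slope * min_viewport_px

    def rem(px: float) -> str:
        return f"{px / root_px:.4g}rem"

    # 1vw is 1% of the viewport width, so slope * 100 is the vw coefficient
    preferred = f"{rem(intercept_px)} + {slope * 100:.4g}vw"
    return f"clamp({rem(min_px)}, {preferred}, {rem(max_px)})"


if __name__ == "__main__":
    print(fluid_font_size(32, 64))  # clamp(2rem, 1.217rem + 3.478vw, 4rem)
```

Expressing the bounds in `rem` rather than `px` keeps the result responsive to a user's browser font-size setting.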

Related Use Cases

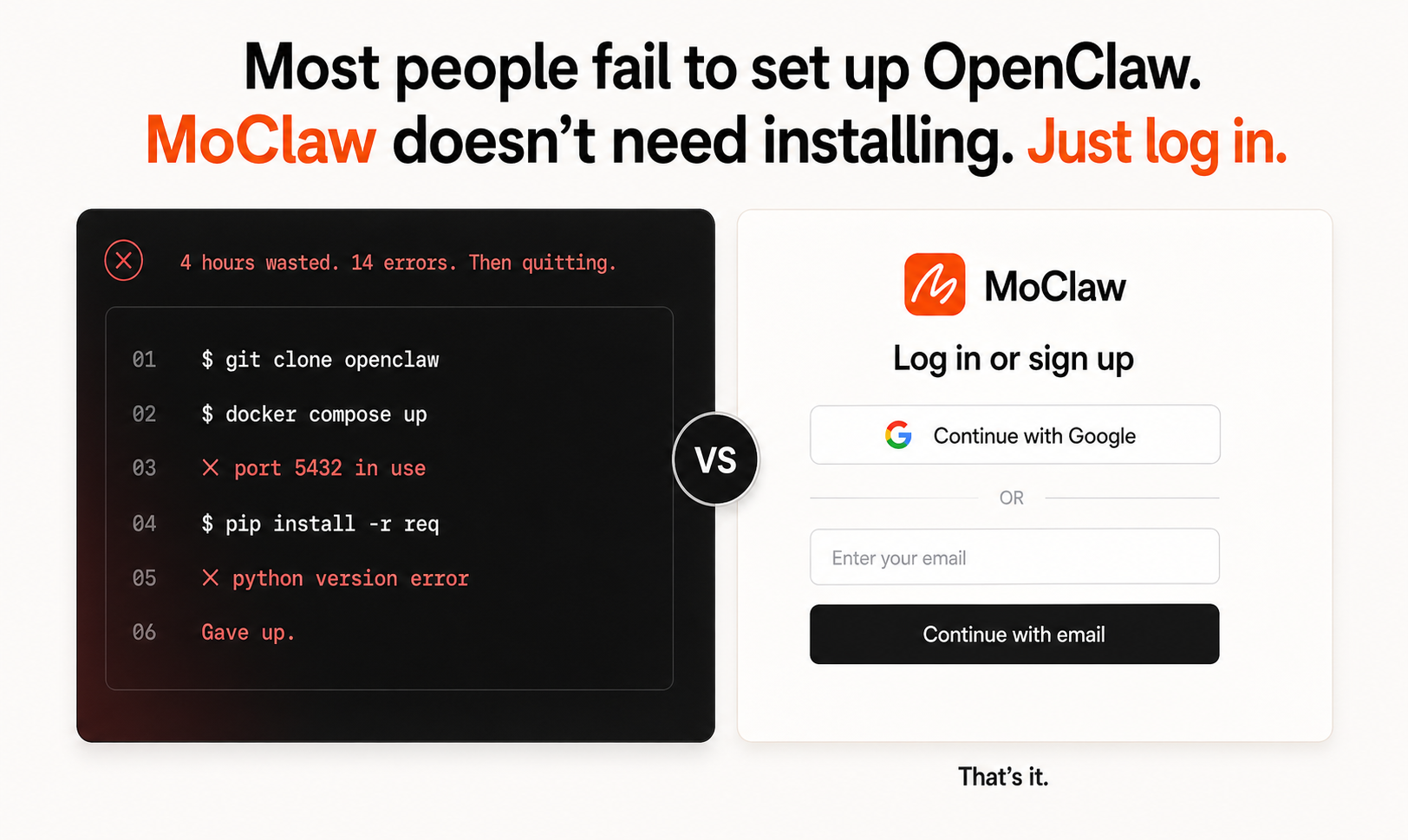

OpenClaw Alternative: Cloud-Hosted, Always-On

Cloud alternative to OpenClaw. No install, no Python conflicts. Your laptop can sleep while MoClaw keeps running. SKILL.md-compatible, so your existing work transfers over.

AI Websites That Don't All Look the Same

Break free from generic AI websites. MoClaw designs each section visually first, then converts to code, so your site looks intentional, not auto-generated.

AI-Built Subscription Tracker App

MoClaw builds a complete subscription tracker app with spending dashboard, renewal alerts, category charts, and calendar view from a single prompt.

Try AI Image-to-Code Frontend Builder for free

No credit card required